What does it take to win a data competition?

Showcasing the three winners of the Uniswap 12 Days Of Dune competition, and explaining what they did well.

Our competition and course covering Uniswap in 12 days is now complete! Hundreds of analysts completed the course, and we received over a dozen quality submissions to the competition. Thanks again to the Uniswap Foundation for co-hosting the competition.

Check out the thread below for a refresher on the course:

The competition question was open-ended, asking analysts to come up with creative ways of measuring the percentage of pairs that were high or low quality.

Our three winners were:

@0xmandy22 (submission) concluded that 52 USDC pairs are high quality (1.8%).

@dumbcryptoape (submission) concluded that only 3 WETH pairs (USDC, USDT, and WISE) are high quality.

@The_Douglas_Fir (submission) concluded that only 3 DAI pairs (USDC, USDT, and WETH) are high quality.

Congrats to you all! Now, let’s walk through what made their submissions high quality.

Creating a Winning Submission

You’ll find that the winning submissions all have cohesive storytelling, clear visualizations, and contextualized queries.

Cohesive Storytelling:

Each winner chose a different medium to explain their findings: in-dash commentary, a thread, and an article.

With 0xmandy22’s dashboard, they kept all chart descriptions very concise with just the reasoning and takeaway. It’s easy to get distracted looking around at a dashboard, so this helps a lot with flow.

With dumbcryptoape’s thread, they kept the narrative engaging with memes and contextual comparisons, like how the price impact curve looks exactly like the constant product curve (xy=k).
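To make that comparison concrete, here is a minimal sketch of price impact in a constant product pool. It is not taken from any of the submissions: the function names, the `fee` parameter, and the example reserves are all assumptions chosen for illustration. With no fee, the impact of swapping `amount_in` into a pool simplifies to `amount_in / (reserve_in + amount_in)`, which is why the price impact curve inherits the shape of the xy=k curve.

```python
def swap_output(reserve_in: float, reserve_out: float,
                amount_in: float, fee: float = 0.0) -> float:
    """Tokens received from a constant product pool (x * y = k).

    `fee` is a fraction taken from the input (hypothetical default of 0
    so the invariant is easy to check by hand).
    """
    effective_in = amount_in * (1 - fee)
    # Solve (reserve_in + effective_in) * (reserve_out - out) = reserve_in * reserve_out
    return reserve_out * effective_in / (reserve_in + effective_in)


def price_impact(reserve_in: float, reserve_out: float,
                 amount_in: float, fee: float = 0.0) -> float:
    """Fractional gap between the spot price and the realized price."""
    spot_price = reserve_out / reserve_in
    execution_price = swap_output(reserve_in, reserve_out, amount_in, fee) / amount_in
    return 1 - execution_price / spot_price


if __name__ == "__main__":
    # Illustrative reserves only, not data from the competition.
    for size in (1, 10, 100):
        print(size, round(price_impact(1_000, 2_000_000, size), 4))
```

Deeper reserves flatten this curve, which is one reason a reserve-based filter is a reasonable first pass at separating high and low quality pairs.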

With the_douglas_fir’s article, they dove deeper into explaining methodologies and interpretations, so readers get more out of the dashboard than they would by trying to figure it out on their own.

If you can do all three, then that will give you the best results across an array of audiences. I try to do that with my dashboards, for example the quickstart dashboard came with an article/video and a thread.

Clear Visualizations:

Charts need to be easily readable and digestible. One of the main difficulties of this challenge was the sheer number of pairs to visualize (thousands at a time), as well as the number of variables involved.

0xmandy22 handled this particularly well by narrowing down the set of pairs to visualize at each step.

dumbcryptoape set a higher minimum reserve (2000 WETH) to reduce the number of pairs by a large percentage from the start.

An easy improvement for these charts would have been to create a horizontal or vertical constant line to show exactly where your cutoff is for each threshold.
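The reserve cutoff idea can be sketched in a few lines. This is only an illustration under assumptions: the pair names and reserve figures below are made up, the helper names are hypothetical, and the winners built their filters as Dune queries rather than in Python.

```python
from typing import TypedDict


class Pair(TypedDict):
    name: str
    weth_reserve: float


def filter_by_min_reserve(pairs: list[Pair], min_reserve: float = 2000.0) -> list[Pair]:
    """Keep only pairs whose WETH reserve meets the cutoff."""
    return [p for p in pairs if p["weth_reserve"] >= min_reserve]


def share_kept(pairs: list[Pair], min_reserve: float = 2000.0) -> float:
    """Fraction of pairs that survive the cutoff (0.0 for an empty list)."""
    if not pairs:
        return 0.0
    return len(filter_by_min_reserve(pairs, min_reserve)) / len(pairs)


# Invented sample data, not results from any submission.
SAMPLE_PAIRS: list[Pair] = [
    {"name": "TOKEN_A/WETH", "weth_reserve": 15_000.0},
    {"name": "TOKEN_B/WETH", "weth_reserve": 2_000.0},
    {"name": "TOKEN_C/WETH", "weth_reserve": 350.0},
    {"name": "TOKEN_D/WETH", "weth_reserve": 12.5},
]

if __name__ == "__main__":
    kept = filter_by_min_reserve(SAMPLE_PAIRS)
    print([p["name"] for p in kept], share_kept(SAMPLE_PAIRS))
```

Keeping the cutoff in a single `min_reserve` value also makes it easy to reuse the same number for the constant line on the chart.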

Contextualized Queries:

It’s good to always assume the reader has almost no background context (either technical or historical) on the topic. Both dumbcryptoape and the_douglas_fir brought in time series analysis to back up their point-in-time comparison queries:

dumbcryptoape and the_douglas_fir both included time series on TVL, which show how stable reserves are over time for individual pairs (and for the protocol as a whole).

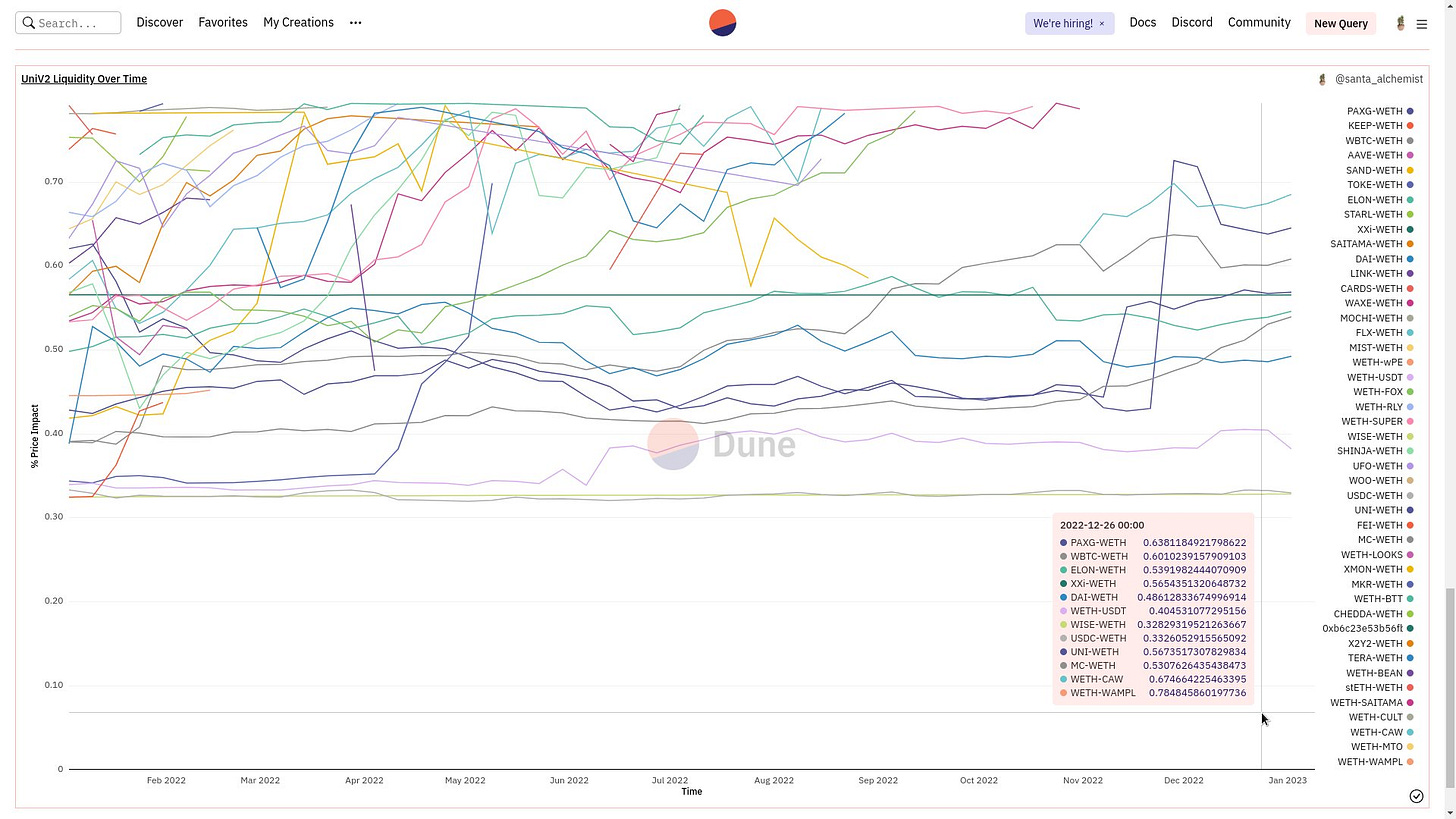

dumbcryptoape showed price impact over time instead of relying only on a cumulative average or standard deviation metric. The chart could be improved by showing only a filtered subset of pairs so the lines are less messy.

It’s good to also have a mix of tables and charts, since tables are much more searchable and can link to other helpful resources like Etherscan. There was a general lack of table usage across submissions.

Hopefully, you’ve learned something here that you can apply to your next analytics task. Great job to these wizards once again!

Continuing Your Learning Journey

While we’ll have many more courses, competitions, and bounties in the future, there are also two new initiatives starting this week:

The first is a Bitcoin-focused group, where you’ll get to work together with me and other top analysts like Hildobby, Kofi, and Jackie to dive deep into protocol metrics (steps to join).

The second will be announced on Friday - make sure to subscribe so you don’t miss it :)